On November 18, New York City mayor Bill de Blasio announced that the city’s schools would close until further notice. Students have returned to remote learning, while restaurants and bars remain open; even indoor dining is permitted.

This closure came because the city had passed a 3% test positivity rate: 3% of all tests conducted in the city in the week leading up to November 18 had returned positive results, indicating to the NYC Department of Health and de Blasio that COVID-19 was spreading rampantly in the community. As a result, and as de Blasio had promised in September, the city’s schools had to close.

But that 3% value is less straightforward than it first appears. In closing schools, de Blasio cited data collected by the NYC Department of Health, which counts new test results on the day that they are collected. The state of New York, however, which controls dining bans and other restrictions, counts new test results on the day that they are reported. Here’s how Joseph Goldstein and Jesse McKinley explain this discrepancy in the New York Times:

> So if an infected person goes to a clinic to have his nose swabbed on Monday, that sample is often delivered to a laboratory where it is tested. If those results are reported to the health authorities on Wednesday, the state and city would record it differently. The state would include it with Wednesday’s tally of new cases, while the city would add it to Monday’s column.

In addition, the state reports tests in units of test encounters, while the city appears to report in units of people. (See my September 6 issue for details on these unit differences.) The state also includes antigen tests in its count, while the city only includes PCR tests. These small differences in test reporting methodologies can make a sizeable dent in the day-to-day numbers. On the day that Goldstein and McKinley’s piece was published, for example, the city reported an average test positivity rate of 3.09%, while the state reported a rate of 2.54% for the city.
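To make the date and test-type differences concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the six test records are invented, and the function name `positivity_by_day` and its parameters are my own, not anything the city or state actually uses. It attributes the same tests either to their collection date with PCR only (roughly the city’s approach as described above) or to their report date with antigen tests included (roughly the state’s). It does not attempt to model the people-versus-encounters difference.

```python
from collections import defaultdict
from datetime import date

# Hypothetical test records, invented for illustration only:
# (collection date, report date, test type, positive?)
TESTS = [
    (date(2020, 11, 16), date(2020, 11, 16), "antigen", False),
    (date(2020, 11, 16), date(2020, 11, 18), "pcr", True),
    (date(2020, 11, 16), date(2020, 11, 18), "pcr", False),
    (date(2020, 11, 17), date(2020, 11, 18), "pcr", False),
    (date(2020, 11, 18), date(2020, 11, 18), "antigen", False),
    (date(2020, 11, 18), date(2020, 11, 18), "pcr", True),
]


def positivity_by_day(tests, date_index, include_antigen):
    """Return {day: share of tests that were positive}.

    date_index selects which date a test is attributed to:
    0 = collection date, 1 = report date.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for record in tests:
        test_type, is_positive = record[2], record[3]
        if test_type == "antigen" and not include_antigen:
            continue  # skip antigen tests when only PCR is counted
        day = record[date_index]
        totals[day] += 1
        positives[day] += is_positive  # True counts as 1, False as 0
    return {day: positives[day] / totals[day] for day in sorted(totals)}


# Collection date, PCR only (an approximation of the city's method).
city_style = positivity_by_day(TESTS, date_index=0, include_antigen=False)

# Report date, antigen included (an approximation of the state's method).
state_style = positivity_by_day(TESTS, date_index=1, include_antigen=True)

for label, rates in (("city-style", city_style), ("state-style", state_style)):
    for day, rate in rates.items():
        print(f"{label} {day.isoformat()}: {rate:.0%}")
```

With only six made-up tests, the daily rates swing far more than real figures would, but the point carries over: the same underlying results land on different days and in different denominators depending on the counting rules, so the two agencies can report different positivity rates for the same city.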

Meanwhile, some public health experts have questioned why a test positivity rate would even be used in isolation. The CDC recommends using a combination of test positivity, new cases, and a school’s ability to mitigate virus spread through contact tracing and other efforts. But NYC became fixated on that 3% benchmark; when the benchmark was hit, the schools closed.

Overall, the NYC schools discrepancy is indicative of an American education system that is still not collecting adequate data on how COVID-19 is impacting classrooms—much less using these data in a consistent manner. Science Magazine’s Gretchen Vogel and Jennifer Couzin-Frankel describe how a lack of data has made it difficult for school administrators and public health researchers alike to see where outbreaks are occurring. Conflicting scientific evidence on how children transmit the coronavirus hasn’t helped, either.

Emily Oster, a Brown University economist whom I interviewed back in October, continues to run one of a few comprehensive data sources on COVID-19 in schools. Oster has faced criticism for her dashboard’s failure to include a diverse survey population and for speaking as an expert on school transmission when she doesn’t have a background in epidemiology. Still, CDC Director Robert Redfield recently cited this dashboard at a White House Coronavirus Task Force briefing—demonstrating the need for more complete and trustworthy data on the topic. The COVID Monitor, another volunteer dashboard led by former Florida official Rebekah Jones, covers over 240,000 K-12 schools but does not include testing or enrollment numbers.

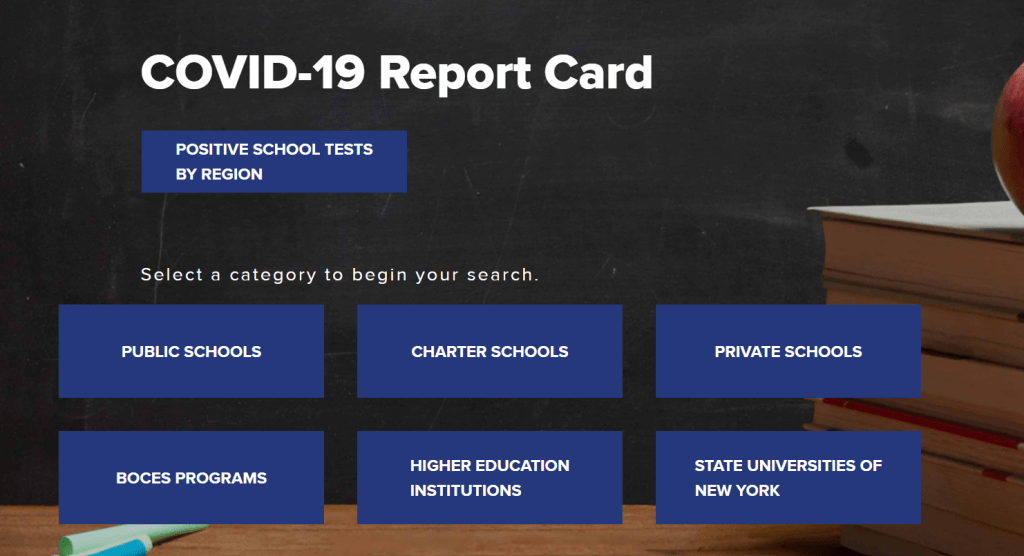

For me, at least, the NYC schools discrepancy has been a reminder to get back on the schools beat. Next week, I will be conducting a review of every state’s COVID-19 school data—including which metrics are reported and what benchmarks the state uses to declare schools open or closed. If there are other specific questions you’d like me to consider, shoot me an email or let me know in the comments.
